Fields of Research

- Intelligent Control - Reinforcement Learning

- Active Vibration Control

- Robust adaptive control techniques – Sliding Mode Control

- Robot localization, motion planning and motion control

- Disturbance Rejection Control

- Back Analysis

- Full Waveform Inversion

- Structural Health Monitoring

- Other fields

Intelligent Control – Reinforcement Learning

Artificial Intelligence (AI) and Reinforcement Learning (RL) techniques have attracted tremendous interest in recent years. The idea of learning and optimizing from experience can be used effectively in control strategies and verified in simulation. Applying these techniques directly to real-life systems, however, is challenging because of uncertainties and disturbances. Our aim is to develop and implement concepts from AI and RL to design effective control strategies and to test them on actual systems in our lab. The inverted pendulum (IP) is one of the most popular benchmark systems for testing new control algorithms. An RL-based control strategy coupled with a PID controller is tested to swing up and balance the linear IP in our lab. The RL Agent is trained in simulation before it is applied to the real experimental system. Current work involves online training of the Agent using different algorithms.
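As a rough illustration of how an RL Agent and a PID controller can be coupled, the sketch below switches between the two based on the pendulum angle. The switching rule, the `capture_angle` threshold, the gains, and the function names are assumptions made for this example; the text above does not specify how the two controllers share the task.

```python
from dataclasses import dataclass


@dataclass
class PID:
    """Discrete PID controller with a fixed sample time dt (seconds)."""
    kp: float
    ki: float
    kd: float
    dt: float
    integral: float = 0.0
    prev_error: float = 0.0

    def step(self, error: float) -> float:
        # Rectangular integration and backward-difference derivative
        self.integral += error * self.dt
        derivative = (error - self.prev_error) / self.dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def hybrid_action(theta: float, agent_action: float, pid: PID,
                  capture_angle: float = 0.2) -> float:
    """Pick the control input for the cart.

    theta is the pendulum angle in rad, measured from upright.
    Assumed rule: PID near upright, otherwise the (pre-trained) agent's action.
    """
    if abs(theta) < capture_angle:
        return pid.step(0.0 - theta)  # regulate the angle to zero
    return agent_action


pid = PID(kp=2.0, ki=0.5, kd=0.1, dt=0.01)      # hypothetical gains
print(hybrid_action(1.0, agent_action=3.0, pid=pid))  # far from upright: agent acts
print(hybrid_action(0.1, agent_action=3.0, pid=pid))  # near upright: PID acts
```

In practice the hand-over between the two controllers usually needs hysteresis and a reset of the integral term, which are omitted here for brevity.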

Description of the figures

Left: Experimental set-up with the inverted pendulum

Right: Schematic of the control strategy where the Agent is trained in simulation and then deployed in the real system along with a PID controller to stabilize the balance.

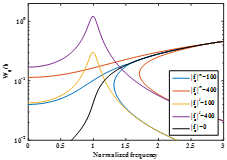

Active Vibration Control

Active Vibration Control (AVC), a relatively mature field of research, deals with continuous mechanical and civil structures subject to unwanted environmental excitations. An intriguing topic is how to link the modelling tools available for these structures, such as reduced-order finite element models or, more importantly, system identification methods, with active and semi-active control systems. Among other topics, our research investigates the nonlinear behavior of structures for uncertainty quantification. In this way, the linear models obtained from time-domain and frequency-domain system identification can be improved. As a result, less conservative model-based control approaches can be developed that address AVC more efficiently.

Robust adaptive control techniques – Sliding Mode Control

Sliding mode control (SMC) is widely used for uncertain linear and nonlinear systems because of its robustness to uncertainties and external disturbances. However, SMC has several disadvantages: the chattering problem, slow response to fast-varying faults, the need to know the upper bound of the unknown function (disturbance, uncertainty, or fault), and slow convergence. To overcome these disadvantages, we propose novel SMC schemes that improve the sliding surface or the reaching law, or that hybridize SMC with other controllers such as backstepping, neural network, or PID controllers, in order to improve performance and alleviate chattering in the control input.
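To make the chattering problem concrete, the following minimal sketch simulates a textbook SMC on a double integrator with a bounded disturbance. It compares the discontinuous sign function with a saturation (boundary layer) function. The plant, the gains `lam`, `K`, `phi`, and the disturbance are assumptions chosen for illustration. The boundary layer is a standard remedy and is not one of the novel schemes described above.

```python
import math


def simulate_smc(smooth, lam=2.0, K=2.0, phi=0.05, dt=1e-3, steps=5000):
    """Regulate x1 to zero for x1' = x2, x2' = u + d(t), with |d| <= 0.5 < K.

    Sliding surface: s = x2 + lam * x1.
    Returns the final x1 and the total variation of u (a simple chattering measure).
    """
    x1, x2 = 1.0, 0.0
    prev_u, variation = None, 0.0
    for k in range(steps):
        s = x2 + lam * x1
        if smooth:
            switch = max(-1.0, min(1.0, s / phi))       # saturation (boundary layer)
        else:
            switch = math.copysign(1.0, s) if s != 0.0 else 0.0  # ideal sign function
        u = -lam * x2 - K * switch                      # gives s' = -K * switch + d
        d = 0.5 * math.sin(2.0 * k * dt)                # disturbance, unknown to the controller
        x1, x2 = x1 + dt * x2, x2 + dt * (u + d)        # explicit Euler step
        if prev_u is not None:
            variation += abs(u - prev_u)
        prev_u = u
    return x1, variation


x1_sign, tv_sign = simulate_smc(smooth=False)
x1_sat, tv_sat = simulate_smc(smooth=True)
print(f"sign: final x1 = {x1_sign:.4f}, total variation of u = {tv_sign:.1f}")
print(f"sat:  final x1 = {x1_sat:.4f}, total variation of u = {tv_sat:.1f}")
```

The saturation version trades a small steady-state error inside the boundary layer for a much smoother control input.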

Robotic manipulators play a crucial role in several industrial sectors and have served as an interesting benchmark in the development and evaluation of new nonlinear controllers. The main purpose of the robot controller is to reduce the trajectory error of the manipulator. However, complex dynamics, nonlinearity, uncertainty, and disturbances dramatically degrade the tracking performance of robots. Therefore, a robot manipulator is used as a benchmark to evaluate and validate the effectiveness of our novel sliding mode controllers.

Description of the figures

Left: Models of robot manipulators, courtesy of the Industrial University of Ho Chi Minh, Vietnam.

Middle: Trajectory tracking of the manipulator joint. The controllers provide good tracking performance, and the manipulator follows the desired profiles after different transient times.

Right: Control input torque of the manipulator joints. In comparison with conventional SMC, less oscillation in control inputs is observed and the chattering is eliminated.

Robot localization, motion planning and motion control

In robotics, the questions "where am I", "where should I be", and "how should I get there" are very challenging, especially in dynamic and dense environments. We concentrate on mobile robots, as they are well researched and widely used for prototype testing of sensor fusion, path planning, and motion control methods. Because each sensor has limited accuracy, the state of the art is to fuse the sensor data in order to obtain a reliable estimate of the robot states such as pose, velocity, and acceleration. For example, wheel odometry is subject to slip and has low accuracy at low velocities, while GNSS is not available indoors or in environments where correction data is received only intermittently.
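The fusion idea can be sketched with a scalar Kalman filter that predicts the position from odometry velocity and corrects it with a position fix whenever one is available. This is a deliberately simplified, one-dimensional illustration; the function name, the noise variances `q` and `r`, and the convention of passing `None` for a missing fix are assumptions for this example.

```python
def kalman_step(x, p, velocity, fix, q, r, dt):
    """One predict/update cycle of a 1D Kalman filter.

    x, p      : position estimate and its variance
    velocity  : odometry velocity used in the prediction
    fix       : position measurement (e.g. GNSS), or None if unavailable
    q, r      : process and measurement noise variances
    """
    # Predict: integrate the odometry and inflate the uncertainty
    x_pred = x + velocity * dt
    p_pred = p + q
    if fix is None:
        return x_pred, p_pred  # no correction possible, e.g. indoors
    # Update: blend prediction and measurement according to their variances
    gain = p_pred / (p_pred + r)
    x_new = x_pred + gain * (fix - x_pred)
    p_new = (1.0 - gain) * p_pred
    return x_new, p_new


x, p = 0.0, 1.0
for fix in [1.2, None, 3.1]:  # the second step has no position fix
    x, p = kalman_step(x, p, velocity=1.0, fix=fix, q=0.1, r=0.1, dt=1.0)
    print(f"estimate = {x:.3f}, variance = {p:.3f}")
```

After an update the variance is smaller than both the predicted variance and the measurement variance, which is the reason fusion pays off; without a fix the variance simply grows.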

While the state of the art in stochastic state estimation based on extended and unscented Kalman filters is mature, a flexible library that can incorporate an arbitrary sensor profile for fusion while guaranteeing a certain accuracy remains a challenging problem. This topic is actively researched in our lab. Our research group also tackles the motion planning problem: given a start pose of the robot, a desired goal pose, a geometric description of the robot, and a geometric description of the world, find a path that moves the robot gradually from start to goal without ever touching an obstacle. Although the path planning problem is defined in the physical workspace, it is solved in another space, namely the configuration space. The planning and control modules are actively researched in our lab as a combination of nonlinear real-time optimization and robust/nonlinear tracking control.
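The planning problem stated above can be illustrated on a discretized configuration space. The sketch below assumes a point robot whose configuration is a grid cell and uses plain breadth-first search, which is a simple stand-in and not the optimization-based planner mentioned in the text. The grid encoding (0 = free, 1 = obstacle) and the function name are assumptions.

```python
from collections import deque


def plan_path(grid, start, goal):
    """Shortest 4-connected path from start to goal, or None if unreachable.

    grid  : list of rows, 0 = free cell, 1 = obstacle
    start : (row, col) of the start configuration
    goal  : (row, col) of the goal configuration
    """
    rows, cols = len(grid), len(grid[0])
    parent = {start: None}  # also serves as the visited set
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:  # walk back to the start
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and nxt not in parent:
                parent[nxt] = cell
                queue.append(nxt)
    return None


world = [
    [0, 0, 0],
    [1, 1, 0],
    [0, 0, 0],
]
print(plan_path(world, start=(0, 0), goal=(2, 0)))
```

For a robot with non-trivial geometry, the obstacles would first be inflated by the robot shape so that the robot can again be treated as a point in configuration space.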

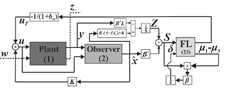

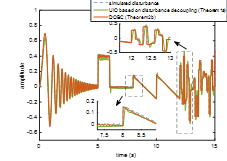

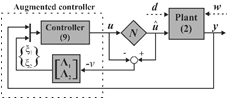

Disturbance Rejection Control

In modern control theory, unknown input signals such as measurement noise, input/output disturbances, and modelling uncertainties are known to be the main sources of performance deterioration. Robust control techniques, the primary method for addressing these issues, are based on worst-case analysis and tend to lead to conservative results. Unknown input observation (UIO) and disturbance estimation can provide a less conservative approach that detects and rejects the disturbance simultaneously. We are developing disturbance rejection control (DRC) methods for linear and nonlinear time-invariant systems based on a combination of disturbance decoupling techniques and UIO. Because of actuator limitations, such methods may lead to nonlinear behavior such as actuator windup, which needs to be addressed separately.
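The principle of estimating a disturbance and cancelling it in the control input can be sketched on a scalar plant. The example below uses a basic disturbance observer with an auxiliary state `z`, chosen so that the estimation error obeys d_hat' = gain * (d - d_hat). It is a simplified stand-in for the UIO-based designs described above. The plant x' = a*x + u + d, the constant disturbance, and all gains are assumptions.

```python
def simulate_dob(a=1.0, k=3.0, gain=5.0, d=0.8, dt=0.01, steps=1000):
    """Stabilize an unstable scalar plant while estimating a constant disturbance.

    Plant:     x' = a * x + u + d      (d is unknown to the controller)
    Observer:  d_hat = z + gain * x,   z' = -gain * (a * x + u + d_hat)
    Control:   u = -k * x - d_hat      (feedback plus disturbance cancellation)
    Returns the final state and the final disturbance estimate.
    """
    x = 1.0
    z = -gain * x  # makes the initial estimate d_hat equal to zero
    for _ in range(steps):
        d_hat = z + gain * x
        u = -k * x - d_hat
        x_dot = a * x + u + d
        z_dot = -gain * (a * x + u + d_hat)
        x += dt * x_dot  # explicit Euler integration
        z += dt * z_dot
    return x, z + gain * x


x_final, d_estimate = simulate_dob()
print(f"final state = {x_final:.2e}, estimated disturbance = {d_estimate:.4f}")
```

Actuator saturation is not modelled here; with a limited input, the cancellation term can drive the actuator into its limits, which is the windup issue mentioned above.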

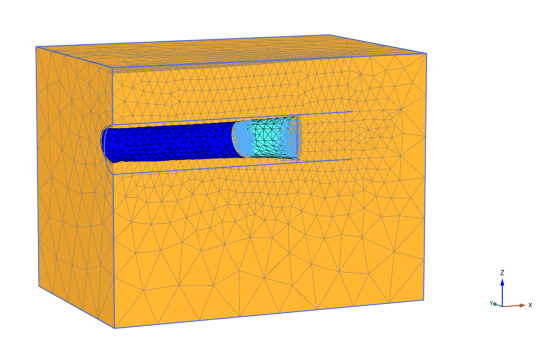

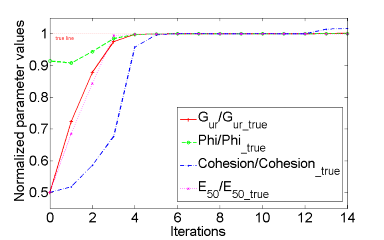

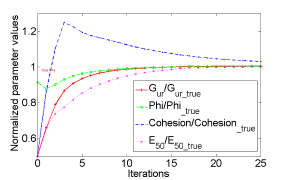

Back Analysis

Back analysis is a model-based inverse problem in geotechnical engineering in which soil parameters are inferred from measurements of available states (typically displacements). This methodology updates the model in order to reduce the mismatch between model outputs and measured data. Although long established, it is not often carried out in geotechnical projects, mainly because of the computational burden of running an excessively large number of elastoplastic finite element (FE) analyses. In our research we develop methods for solving back analysis problems in mechanized tunneling based on the Extended Kalman filter (EKF), the Unscented Kalman filter (UKF), and the Particle filter (PF). Efficiency analysis shows that the EKF and the UKF are very suitable for back analysis in tunneling problems since, unlike other optimization methods, they need only a very limited number of FE runs to converge. Thanks to this efficiency, the sophisticated elastoplastic FE model can be used directly during the back analysis without the need to build an approximate surrogate model. A sophisticated 3D FE tunnel excavation model and the convergence behavior of the EKF and the UKF are shown below.
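The way a Kalman filter can serve as a parameter estimator is sketched below for a single unknown parameter. The function `forward_model` is a toy stand-in for an FE run (displacement of a linear spring), and the loads, noise variances, and initial guess are assumptions. The sensitivity is obtained by finite differences because an FE model normally offers no analytical derivative, so each update costs three forward runs.

```python
def forward_model(stiffness, load):
    """Toy stand-in for an FE analysis: displacement = load / stiffness."""
    return load / stiffness


def ekf_update(theta, p, load, measured, q=1.0, r=1e-4):
    """One EKF step for a scalar parameter modelled as a random walk.

    theta, p : parameter estimate and its variance
    measured : measured displacement for the given load
    q, r     : random-walk and measurement noise variances (assumed values)
    """
    p_pred = p + q
    # Sensitivity of the model output to the parameter (central difference)
    delta = 1e-4 * theta
    h = (forward_model(theta + delta, load)
         - forward_model(theta - delta, load)) / (2.0 * delta)
    innovation = measured - forward_model(theta, load)
    gain = p_pred * h / (h * p_pred * h + r)
    return theta + gain * innovation, (1.0 - gain * h) * p_pred


true_stiffness = 50.0                           # value to be recovered
loads = [100.0 + 10.0 * i for i in range(20)]   # hypothetical load steps
theta, p = 30.0, 100.0                          # poor initial guess, large variance
for load in loads:
    synthetic_measurement = forward_model(true_stiffness, load)  # noise-free
    theta, p = ekf_update(theta, p, load, synthetic_measurement)
print(f"identified stiffness = {theta:.4f}")
```

With several unknown soil parameters the scalars become vectors and matrices, and the number of forward runs per update grows with the number of parameters.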

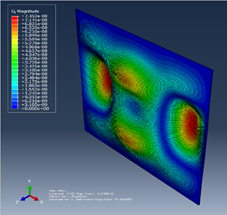

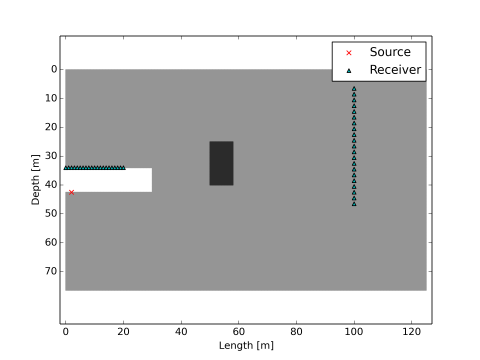

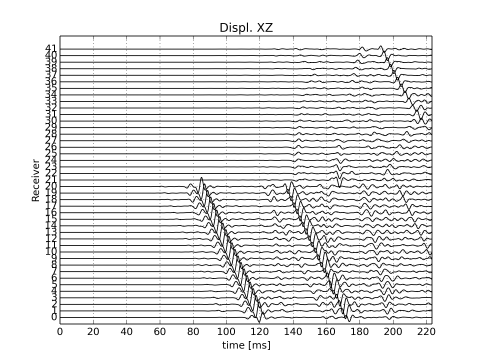

Full Waveform Inversion

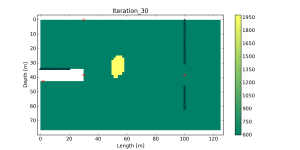

Determining the inner structure of materials is highly important in various research fields, for instance in mechanics, civil engineering, geophysics, and medical imaging. To find the distribution of material properties in a non-destructive manner, wave propagation methods can be applied: seismic waves are released, and the signal response is recorded and evaluated. Full Waveform Inversion (FWI) is a methodology for acquiring high-resolution models by exploiting, as the name suggests, the full recorded waveform. Our research focuses on an application during mechanized tunneling (reconnaissance), where seismic waves released from inside the tunnel or from the Earth's surface are inverted in order to resolve the region in front of the tunnel face. Furthermore, our research is based on inversion with Bayesian (statistical) methods rather than gradient-based (adjoint) methods. The inversion methods exploit hybrid approaches based on the Unscented Kalman Filter (UKF), either supported by a level set parametrization or combined with simulated annealing. The figures below show the reconstruction of a subsurface scenario with a hard rectangular disturbance using simulated vertical displacement waveforms and the UKF supported by the level set parametrization.
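The core building block of the UKF is the unscented transform, which propagates a mean and variance through a nonlinear function using a few deterministically chosen sigma points instead of gradients. The scalar sketch below illustrates it. The function `f` stands in for the wave-propagation forward model, and the choice `kappa = 2` (so that n + kappa = 3 for one dimension) is a common heuristic adopted here as an assumption.

```python
import math


def unscented_transform(mean, var, f, kappa=2.0):
    """Propagate a scalar Gaussian (mean, var) through f using three sigma points."""
    n = 1  # state dimension
    spread = math.sqrt((n + kappa) * var)
    points = [mean, mean + spread, mean - spread]
    weights = [kappa / (n + kappa), 0.5 / (n + kappa), 0.5 / (n + kappa)]
    outputs = [f(x) for x in points]  # one forward-model run per sigma point
    y_mean = sum(w * y for w, y in zip(weights, outputs))
    y_var = sum(w * (y - y_mean) ** 2 for w, y in zip(weights, outputs))
    return y_mean, y_var


# For f(x) = x**2 and x ~ N(1, 0.25), the exact mean is 1.25 and the exact variance 1.125.
y_mean, y_var = unscented_transform(1.0, 0.25, lambda x: x * x)
print(y_mean, y_var)
```

Because only forward evaluations are needed, no adjoint or gradient code has to be derived for the wave solver, at the price of 2n + 1 forward runs per update for n parameters.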

In addition to numerical validation, the full waveform inversion methods are validated experimentally. Since tunneling field data is difficult to obtain, a small-scale setup has been developed in our laser laboratory, in which ultrasonic data is acquired by laser interferometry. By investigating small-scale specimens that are, in a certain sense, similar to large-scale tunneling models, our methods are validated and prepared for application during mechanized tunneling. The bottom left picture again shows the results of a numerical validation, where the grey tones represent the determined structure and the black dashed lines mark the true geometry. The results show that the applied method is generally able to deliver highly precise reconstructions of the subsoil. The middle picture shows a specimen constructed to resemble a field model. The right figure shows the determined inner structure for the cross-section of the specimen, where the black dashed lines again mark the expected true geometry. Here, too, very satisfying results are achieved, which demonstrates the potential of the methods for application during mechanized tunneling.

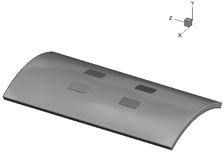

Structural Health Monitoring

Structural Health Monitoring (SHM) is the continuous assessment of structural integrity using sensors that are permanently installed. Furthermore, SHM enables condition-based structural maintenance to replace the current inefficient schedule-based maintenance practice. This technique frequently comprises (1) examining the structure, (2) extracting damage-sensitive features, and (3) developing appropriate approaches for reaching final conclusions on the occurrence of any damage, its location, and severity.

Our research aims to investigate and create unique algorithms that make an SHM scheme more resilient and practical.

Damage detection in thin plates is being researched for this purpose using ultrasonic wave propagation principles. A variety of approaches, ranging from triangulation-based methods such as delay and sum to machine learning-based algorithms, are being developed and evaluated toward this end.
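A delay-and-sum localization can be sketched as follows: for every candidate point on the plate, the travel time from the actuator to that point and on to the sensor is computed, and the scattered-signal envelopes of all transducer pairs are sampled at those times and summed. The point with the largest sum is taken as the damage estimate. The plate size, the constant wave speed, the sampling rate, and the synthetic Gaussian envelopes are all assumptions used to keep the example self-contained.

```python
import math


def delay_and_sum(pairs, signals, fs, speed, grid):
    """Return the grid point with the largest summed envelope amplitude.

    pairs   : list of (actuator_xy, sensor_xy) positions in metres
    signals : one scattered-signal envelope per pair (baseline already removed)
    fs      : sampling rate in Hz; speed: assumed constant wave speed in m/s
    """
    best_point, best_value = None, -math.inf
    for point in grid:
        total = 0.0
        for (tx, rx), signal in zip(pairs, signals):
            tof = (math.dist(tx, point) + math.dist(point, rx)) / speed
            idx = round(tof * fs)  # nearest sample to the time of flight
            if 0 <= idx < len(signal):
                total += signal[idx]
        if total > best_value:
            best_point, best_value = point, total
    return best_point


def synthetic_envelope(tof, fs, n_samples, width=5e-6):
    """Gaussian pulse centred at the time of flight (stand-in for measured data)."""
    return [math.exp(-0.5 * ((n / fs - tof) / width) ** 2) for n in range(n_samples)]


fs, speed = 1.0e6, 5000.0  # hypothetical values
transducers = [(0.0, 0.0), (0.4, 0.0), (0.4, 0.4), (0.0, 0.4)]
pairs = [(a, b) for i, a in enumerate(transducers) for b in transducers[i + 1:]]
damage = (0.27, 0.16)      # ground truth used to synthesize the signals
signals = [
    synthetic_envelope((math.dist(tx, damage) + math.dist(damage, rx)) / speed, fs, 256)
    for tx, rx in pairs
]
grid = [(0.01 * i, 0.01 * j) for i in range(41) for j in range(41)]
estimate = delay_and_sum(pairs, signals, fs, speed, grid)
print("estimated damage location:", estimate)
```

Real guided waves in thin plates are dispersive and multimodal, so a measured signal needs envelope extraction and a suitable group velocity before this summation is meaningful.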

Description of the figures

Left: Separability of damage scenarios before and after applying our method, which reduces the dimension of the input signal to two.

Middle: Experiment - a layout of the ultrasonic testbench using piezopatches and TiePie DAQ.

Right: Damage location detected by the DaS algorithm and the actual damage coordinates.

We also develop methods of active structural health monitoring and damage detection in concrete structures using piezoelectric smart aggregates and damage assessment based on damage indices obtained through wave propagation.

This includes the simulation and experimental investigation of different concrete models. Excited waves propagate through the structures and are recorded at different observation points. Postprocessing of the acquired signals provides information on the existence and location of damage.
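One common way to turn two recorded signals into a damage index is the root-mean-square deviation (RMSD) between a baseline signal from the healthy structure and the current signal. The sketch below uses this index as an assumption, since the text does not name the specific indices used; the function name and the sample values are hypothetical.

```python
import math


def rmsd_damage_index(baseline, current):
    """RMSD-based damage index: 0 for identical signals, larger for stronger deviation."""
    if len(baseline) != len(current):
        raise ValueError("signals must have the same length")
    deviation = sum((c - b) ** 2 for b, c in zip(baseline, current))
    energy = sum(b ** 2 for b in baseline)
    return math.sqrt(deviation / energy)


baseline = [1.0, 2.0, 3.0]   # hypothetical samples from the healthy structure
attenuated = [0.5, 1.0, 1.5]  # same waveform at half amplitude
print(rmsd_damage_index(baseline, baseline))    # identical signals
print(rmsd_damage_index(baseline, attenuated))  # attenuated signal
```

Evaluating such an index for each actuator-sensor path and comparing the paths gives a first indication of where the damage lies.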

Our methods cover damage detection in both 2D and 3D models. Abaqus is used for the numerical simulation, while the acquired signals are postprocessed by coupling MATLAB and Python codes. Experimental investigation is performed in the MAS laser laboratory using non-destructive laser examination (ultrasonic range).

Description of the figures: Damage localization in 2D concrete plates

Description of the figures

Left: wave propagation in a concrete beam. Right: piezoelectric smart aggregates

Other fields of research

- Overall design of smart adaptive structures and systems

- Controller design for active vibration control (robust adaptive control)

- Real time control and simulation of Hardware-in-the-Loop Systems

- Machine Learning / Artificial Neural Networks

- Model and parameter identification (numerical and experimental)

- Active Structural Acoustic Control

- Machine diagnosis

- Structural Health Monitoring / Non-destructive testing

- Full waveform inversion-based reconnaissance

- Bayesian filter-based parameter identification

- Laser ultrasound-based research