ABSTRACT

Most approaches to speech signal processing rely solely on acoustic input, so spectrum estimation becomes exceedingly difficult when the signal-to-noise ratio drops to values near 0 dB. However, alternative sources of information are becoming widely available with the increasing use of multimedia data in everyday communication. We propose using video input as an auxiliary modality for speech processing by applying a new statistical model, the twin hidden Markov model. The resulting enhancement algorithm for audiovisual data greatly outperforms the standard audio-only log-MMSE estimator on all considered instrumental speech quality measures, covering both spectral and perceptual quality.

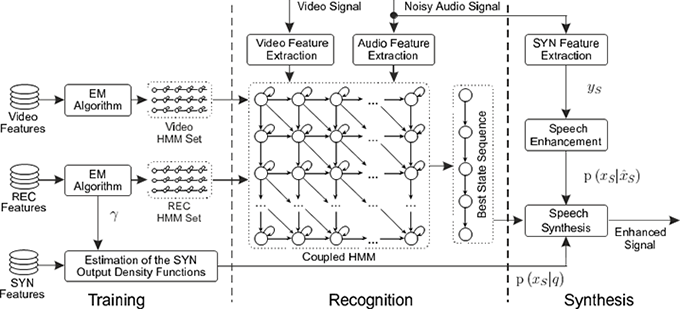

THMM-based audio-visual speech enhancement

Framework of the twin-HMM-based audio-visual speech enhancement (THMMB-AV-SE)

Exemplary Results

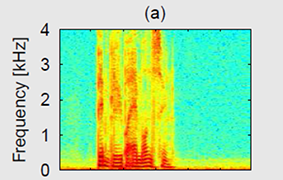

(a) Spectrum of the GRID sentence "BIN BLUE BY M ONE SOON" uttered in clean conditions.

(b) The same sentence with added babble noise at 0 dB SNR.

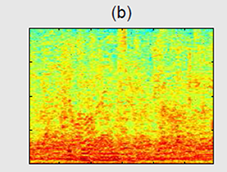

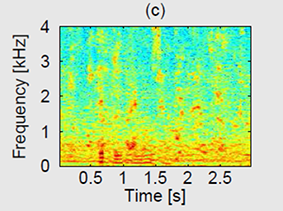

(c) The log-MMSE enhanced spectrum.

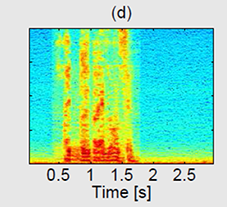

(d) The filtered noisy spectrum after THMMB-AV-SE.
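Panel (b) shows speech mixed with babble noise at 0 dB SNR. As a minimal sketch of how such a mixture can be constructed, the noise can be scaled so that the ratio of average speech power to average noise power matches the target SNR. The function name `mix_at_snr` and its parameters are illustrative assumptions; the source does not specify how its noisy signals were generated.

```python
import math


def mix_at_snr(speech, noise, snr_db):
    """Add `noise` to `speech`, scaled so the mixture has the given SNR in dB.

    Hypothetical helper for illustration only; signals are plain lists of floats.
    """
    if len(noise) < len(speech):
        raise ValueError("noise must be at least as long as speech")
    noise = noise[:len(speech)]  # trim noise to the speech length

    # Average power of each signal.
    p_speech = sum(s * s for s in speech) / len(speech)
    p_noise = sum(n * n for n in noise) / len(noise)
    if p_noise == 0.0:
        raise ValueError("noise has zero power")

    # Choose gain g so that p_speech / (g^2 * p_noise) = 10^(snr_db / 10).
    gain = math.sqrt(p_speech / (p_noise * 10.0 ** (snr_db / 10.0)))
    return [s + gain * n for s, n in zip(speech, noise)]
```

At 0 dB the scaled noise carries the same average power as the speech, which is the condition under which the abstract describes audio-only spectrum estimation as exceedingly difficult.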

Instrumental Quality Measures

Evaluation of twin-HMM-based speech processing in terms of segmental SNR and the perceptually motivated measures PESQ (Perceptual Evaluation of Speech Quality) and STOI (Short-Time Objective Intelligibility).
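Of the measures listed, segmental SNR is simple enough to sketch directly. The version below averages per-frame SNR values in dB between a clean reference and an enhanced signal. The frame length of 256 samples, the non-overlapping framing, and the clamping range of -10 dB to 35 dB are common conventions assumed here for illustration; the source does not state which settings were used.

```python
import math


def segmental_snr(clean, enhanced, frame_len=256, lo_db=-10.0, hi_db=35.0):
    """Mean per-frame SNR in dB, with each frame clamped to [lo_db, hi_db].

    Illustrative sketch: frame length and clamping limits are assumptions,
    not values taken from the source. Inputs are plain lists of floats.
    """
    if len(clean) != len(enhanced):
        raise ValueError("signals must have equal length")

    eps = 1e-12  # avoids division by zero and log of zero
    frame_snrs = []
    # Non-overlapping frames; a trailing partial frame is ignored.
    for start in range(0, len(clean) - frame_len + 1, frame_len):
        c = clean[start:start + frame_len]
        e = enhanced[start:start + frame_len]
        signal_energy = sum(x * x for x in c)
        error_energy = sum((x - y) ** 2 for x, y in zip(c, e))
        snr_db = 10.0 * math.log10((signal_energy + eps) / (error_energy + eps))
        frame_snrs.append(min(max(snr_db, lo_db), hi_db))

    if not frame_snrs:
        raise ValueError("signal is shorter than one frame")
    return sum(frame_snrs) / len(frame_snrs)
```

Clamping keeps silent or perfectly reconstructed frames from dominating the average. PESQ and STOI involve perceptual and intelligibility models that are considerably more elaborate and are normally computed with reference implementations rather than re-derived.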